Building a Resilient Event-Driven Validator on Google Cloud

The Objective

In this technical sprint, I architected and deployed a serverless validation pipeline designed to handle high-velocity POS (Point of Sale) data. The goal was simple: ensure data integrity at scale while creating an "intelligence layer" for future automation.

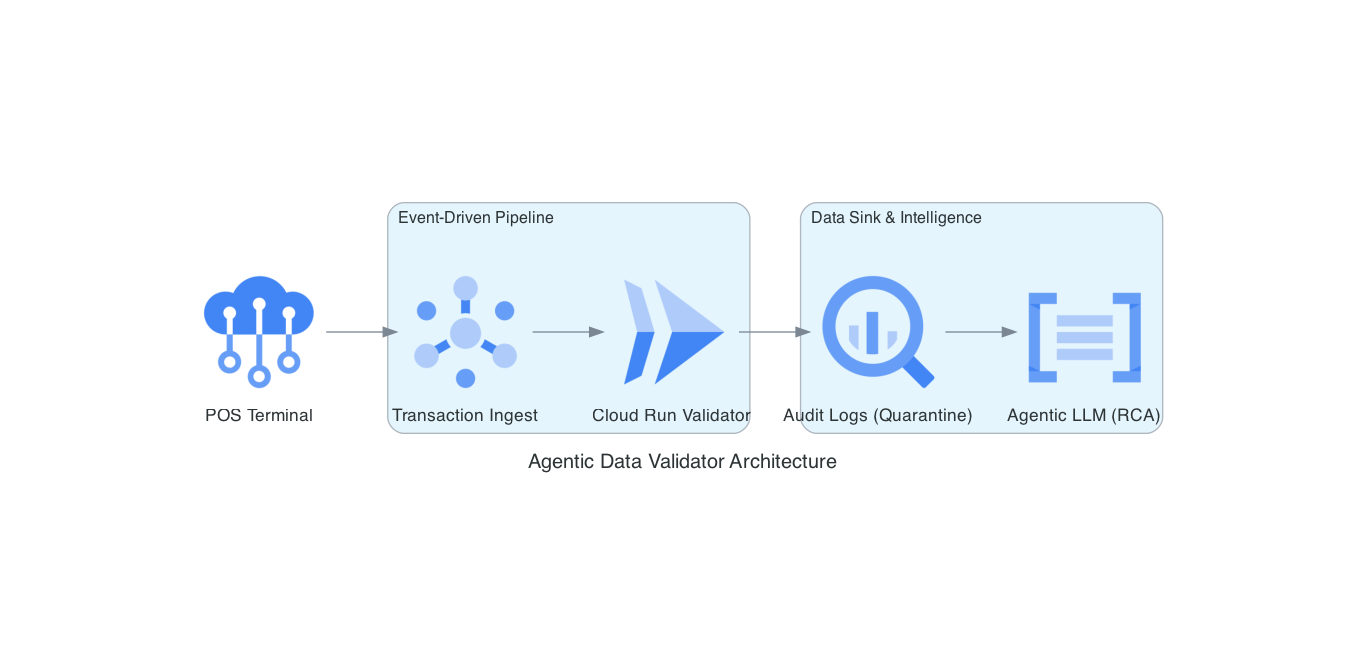

The diagram above shows the pipeline architecture.

The Architecture

The system leverages a decoupled, event-driven design on Google Cloud Platform (GCP):

Ingestion: Real-time data enters via Pub/Sub, ensuring the system can buffer spikes in traffic.

Validation: A Python-based microservice running on Cloud Run acts as a "sensory gatekeeper," validating schemas and identifying anomalies in milliseconds.

Audit Sink: Clean data moves to production, while malformed data is shunted to a structured BigQuery "Quarantine" table.
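The three stages above can be sketched in a few functions. This is a minimal illustration, not the deployed service: it assumes Cloud Run receives messages through a Pub/Sub push subscription (where the payload arrives base64-encoded under `message.data`), and the field names in `REQUIRED_FIELDS`, the "non-positive amount" anomaly rule, and the shape of the quarantine row are all hypothetical. Writing the routed record to BigQuery or a production topic is left out.

```python
import base64
import json
from datetime import datetime, timezone

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {
    "transaction_id": str,
    "store_id": str,
    "amount": (int, float),
    "timestamp": str,
}


def decode_push_envelope(envelope: dict) -> str:
    """Extract the raw payload text from a Pub/Sub push request body."""
    return base64.b64decode(envelope["message"]["data"]).decode("utf-8")


def validate(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the record is clean."""
    try:
        record = json.loads(raw)
    except json.JSONDecodeError:
        return ["invalid_json"]
    if not isinstance(record, dict):
        return ["not_an_object"]

    errors = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in record:
            errors.append(f"missing:{field}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(record[field], bool) or not isinstance(record[field], expected):
            errors.append(f"bad_type:{field}")

    # Assumed anomaly rule: a sale amount should be positive.
    amount = record.get("amount")
    if isinstance(amount, (int, float)) and not isinstance(amount, bool) and amount <= 0:
        errors.append("anomaly:non_positive_amount")
    return errors


def route(envelope: dict) -> tuple[str, dict]:
    """Decide where a message goes: ("production", record) or ("quarantine", row)."""
    raw = decode_push_envelope(envelope)
    errors = validate(raw)
    if not errors:
        return "production", json.loads(raw)
    # Keep the raw payload and the reasons so the quarantine table stays queryable.
    return "quarantine", {
        "raw_payload": raw,
        "errors": errors,
        "quarantined_at": datetime.now(timezone.utc).isoformat(),
    }
```

Returning a list of reasons rather than a single pass/fail flag is what keeps the quarantine table structured: each bad record carries every rule it broke, not just the first one.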

See it in Action

I executed a 100-transaction burst to stress-test the pipeline's shunting logic. You can see the logs and the final BigQuery output in the accompanying walkthrough.
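For readers who want to reproduce a similar burst, here is a minimal sketch of a generator for mixed clean and malformed transactions. It is not the script used in the test: the field names, the 20% malformed ratio, and the three corruption styles are assumptions chosen for illustration. Publishing each string to the Pub/Sub topic is left out.

```python
import json
import random


def corrupt(record: dict, rng: random.Random) -> str:
    """Return a malformed version of the record as a JSON-ish string."""
    kind = rng.choice(("drop_field", "bad_type", "truncate"))
    if kind == "drop_field":
        broken = dict(record)
        del broken["store_id"]
        return json.dumps(broken)
    if kind == "bad_type":
        return json.dumps({**record, "amount": "N/A"})
    # Cutting off the tail removes the closing brace, so the JSON no longer parses.
    return json.dumps(record)[:-5]


def make_burst(n: int = 100, bad_ratio: float = 0.2, seed: int = 42) -> list[str]:
    """Build n message payloads, a fixed share of which are malformed."""
    rng = random.Random(seed)  # seeded so a test run is repeatable
    n_bad = int(n * bad_ratio)
    messages = []
    for i in range(n):
        record = {
            "transaction_id": f"txn-{i:04d}",
            "store_id": f"store-{rng.randint(1, 5)}",
            "amount": round(rng.uniform(1, 500), 2),
        }
        messages.append(corrupt(record, rng) if i < n_bad else json.dumps(record))
    rng.shuffle(messages)  # interleave bad and good records
    return messages
```

Because the number of malformed messages is known up front, the quarantine row count in BigQuery can be checked against it after the run.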

The Path Forward: Agentic AI

This isn't just a dead-letter queue. Because anomalies land in a structured BigQuery sink rather than in raw logs, the data is already in a form an agentic LLM can query. The next phase involves using AI agents to perform automated root-cause analysis on these quarantined records, moving from reactive monitoring to proactive system healing.
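As a rough illustration of why the structured sink matters, the sketch below condenses quarantined rows into a short summary that could be handed to an agent as context. It assumes each row carries an `errors` list of reason strings, which is a hypothetical row shape, and it stops short of any model call, since that phase is not built yet.

```python
from collections import Counter


def summarize_quarantine(rows: list[dict], top_n: int = 3) -> str:
    """Condense quarantined rows into a short text summary of failure reasons."""
    # Count every reason across all rows; one row may carry several.
    counts = Counter(reason for row in rows for reason in row["errors"])
    lines = [f"{len(rows)} quarantined records"]
    for reason, count in counts.most_common(top_n):
        lines.append(f"- {reason}: {count}")
    return "\n".join(lines)
```

In practice the same grouping would more likely be done with a SQL query against the quarantine table; the point is that reasons stored as structured values can be aggregated directly instead of being parsed out of log text.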